What Is ETL in the Context of Data Analytics: A Beginner’s Guide

Businesses collect an insane amount of data from dozens of different sources every day. The biggest issue is that customer information sits in one system, sales data in another, inventory data in another and so on.

ETL—which stands for Extract, Transform, Load—is the process that pulls data from all these different sources, cleans it up, and puts it in one place where you can actually use it for profit-driving insights.

Without ETL, your data will remain scattered across different systems in different formats. Think of it like pumping raw oil out of the ground without refining it.

ETL solves this by creating a single source-of-truth for your organization.

This article walks through what ETL is in the context data analytics. You’ll see what happens in each step, how ETL has changed over time, and what tools can help you manage your data.

Whether you’re new to data analytics or just want to improve your current setup, understanding the ETL process is a good place to start!

Understanding ETL in Data Analytics

ETL is the starting point and acts as the backbone of data analytics. It lets organizations pull information from different sources, standardize it, and prepare it for analysis. That analysis could be with a data visualization tool like Power BI or as a refined data source for an AI like SkAI.

This process turns scattered, inconsistent data into a unified format that supports business intelligence and smarter decisions.

Definition and Core Concepts

ETL stands for Extract, Transform, Load—a data integration process that combines information from multiple sources into a single, source-of-truth. You use ETL to move data from various systems into a target data warehouse or data lake where it becomes accessible for analytics.

Your data often lives in different places with different formats. Customer info might exist in a CRM, sales data in an ERP, and web traffic in analytics tools. ETL brings all this information together.

At its core, ETL focuses on three key principles. First, it ensures data quality through cleansing and validation.

Second, it creates unified data by applying consistent business rules across all sources.

Third, it prepares data for analytics by organizing it into formats that support reporting and business intelligence.

The ETL Process: Extract, Transform, Load

Extract is the first stage where you pull raw data from source systems. These sources include SQL databases, CRM platforms, APIs, flat files, spreadsheets, web pages, etc.

The data often moves to a staging area—a temporary location where transformation happens.

Transform is where the real work or “magic” happens. You apply transformation logic to clean, structure, and prepare your data. Transformation can occur before database insertion, after database insertion, or further downstream in query editors inside Power BI or Tableau).

This includes data cleansing to remove duplicates and errors, data validation to ensure accuracy, and data mapping to align fields across systems. You might also convert currencies, standardize dates, or calculate metrics. Helper tables are often required to bring it all together.

Load moves the transformed data into your target data warehouse. You usually start with an initial load, then perform incremental updates to add new or changed records (daily, hourly, etc.)

Most organizations schedule these loads during off-hours to minimize system impact.

ETL’s Role in Modern Analytics

ETL pipelines lay the foundation for analytics and business intelligence. Without solid data integration, reports can’t be trusted and decisions might rest on shaky ground.

The process enables data consolidation from both legacy systems and modern applications. You get a single source of truth that cuts down on conflicting reports and data silos. It also bridges across time preserving history when a company has migrated systems over the years.

Good ETL processes allow your analytics teams to focus on finding insights instead of searching for data, massaging it manually, or questioning its accuracy. ETL has evolved with cloud computing to handle bigger data volumes and faster processing.

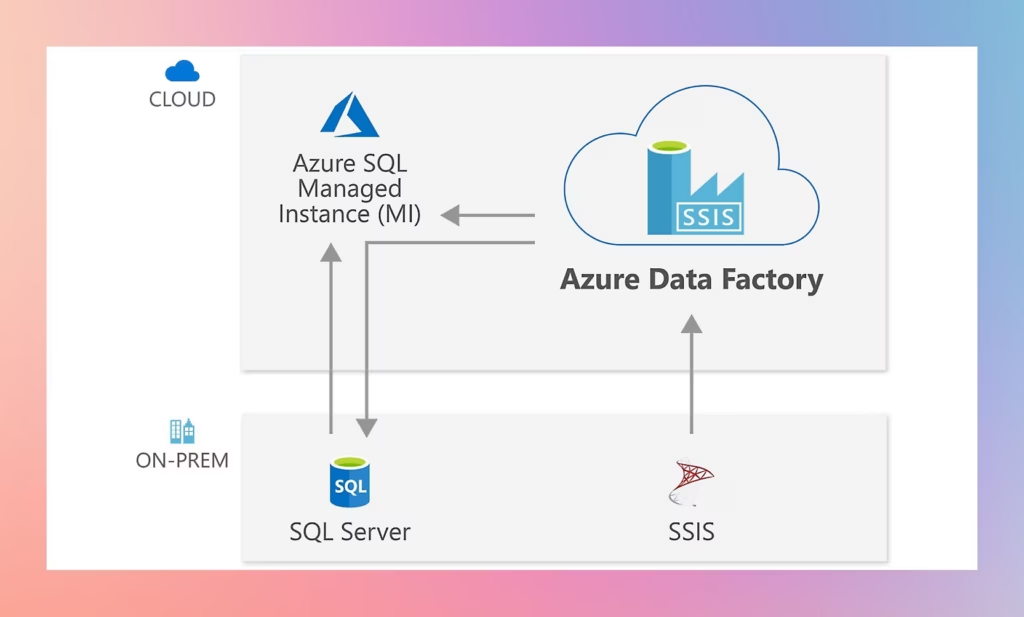

What is nice about cloud-based ETL solutions, such as Azure Data Factory, is you easily scale resources up or down as needed.

What is ETL: Azure Data Factory

Key Entities and Components

Several key parts work together in your ETL pipeline:

Data Sources: Where your raw data comes from—transactional databases, applications, external feeds.

Staging Area: A temporary storage spot where data transformation happens before loading into the final destination.

Transformation Engine: The software that applies your business rules, cleans data, and maps fields.

Target Data Warehouse: The final home for transformed data, ready for analytics and reporting.

Modern ETL tools offer visual interfaces for building data pipelines without much coding. There are many no-code and low-code solutions out there. Although very far from perfect, these solutions can be helpful for those just getting started in their data analytics journey.

Truthfully, you will eventually need to graduate to fully coded/managed ETL processes as low code and visual platforms struggle and lack the control necessary for more complex processes.

Traditional ETL vs. Modern Approaches

Traditional ETL follows a strict extract-transform-load sequence. You pull data from sources, transform it separately, and then load it into a data warehouse.

This approach works when you need tight control over data quality before it reaches your destination.

Modern approaches sometimes include ELT (extract-load-transform), where you load raw data first and transform it inside powerful cloud data warehouses like Azure SQL, Snowflake, or BigQuery. ELT taps into the processing power already built into these platforms.

The main difference is timing and location. Traditional ETL transforms data before loading, which protects your warehouse from bad data. ELT loads data first, which is faster but relies on your destination system’s ability to handle transformations. The latter has the benefit of seeing all the data in the data warehouse pre- and post- transformation, but also has an additional processing costs in terms of virtual cores needed.

Neither is always better. You might use traditional ETL for sensitive data that needs validation. ELT makes sense for massive datasets in the cloud where compute power is cheap and scalable.

Frequently Asked Questions

ETL processes support data analytics by cleaning data, combining information from multiple sources, and preparing it for analysis. These systems follow specific steps to move data and address common technical challenges.

How do ETL processes enhance data analytics projects?

ETL processes make your data ready for analysis by combining information from different sources into one location. When you extract data from various systems, transform it into a consistent format, and load it into a central warehouse, you create a single source of truth for your organization. This is incredibly valuable!

Your analytics projects become more reliable because ETL cleans and validates data before you use it. The process removes duplicates, fixes errors, and ensures all data follows the same format.

This means you spend less time cleaning data and more time finding insights.

ETL also helps you work with historical data more easily. The process can track changes over time and maintain records of how your data has evolved.

You can run complex queries faster because the data is already organized and structured for analysis.

What are the primary components and steps of an ETL workflow?

The extract step pulls raw data from your source systems. You can extract from databases, spreadsheets, cloud applications, and many other sources.

This data moves to a staging area where it waits for processing.

The transform step changes your data into the format you need. You can filter out unwanted records, combine data from different sources, and fix inconsistencies.

Common transformations include removing duplicates, converting data types, calculating new values, and standardizing formats.

The load step moves your transformed data into its final destination. This is usually a data warehouse or data lake where you can run analytics.

Most organizations load all their data first, then set up regular updates to add new information as it becomes available.

Can you compare and contrast ETL with ELT regarding performance and use cases?

ETL transforms data before loading it into your target system, while ELT loads raw data first and transforms it later. Your choice between these methods depends on your data volume and processing needs.

ETL works well when you need to clean data before storing it or when working with smaller data sets. You can control exactly what data enters your warehouse and ensure it meets quality standards.

This approach requires more planning upfront because you must define transformation rules before loading data.

ELT handles large volumes of unstructured data more efficiently. You can load data quickly without waiting for transformations to complete.

Cloud data warehouses with strong processing power make ELT practical because they can transform massive amounts of data after loading it.

What are some common challenges faced during ETL implementations?

Data quality issues create major problems during ETL. Your source systems may contain duplicate records, missing values, or inconsistent formats.

You need to build transformation rules that handle these problems without losing important information.

Performance bottlenecks slow down your ETL processes when dealing with large data volumes. Moving and transforming millions of records takes time and computing resources.

You must schedule ETL jobs during off-peak hours or find ways to process data more efficiently.

Maintaining ETL pipelines takes ongoing work as your data sources change. When source systems add new fields or change data formats, your ETL processes must adapt.

You also face challenges integrating data from different systems that use incompatible formats or conflicting business rules.

How does ETL differ from traditional SQL queries in data handling?

SQL queries pull data straight from databases when you need it. You write a query, the database handles it, and you get results right away. This is often part of the downstream data analytics process and not part of the upstream ETL.

ETL, on the other hand, is part of the data warehouse build process. It builds out a whole data pipeline that runs on a schedule. The ETL process grabs data from several sources, transforms it—sometimes in pretty complex ways—and stores the results for later.

That means you can fetch processed data without hammering your main systems with heavy queries.

SQL queries shine for real-time needs and straightforward tasks. ETL steps in when you need to blend data from different places, clean it up, and keep it fresh on a regular basis.

Honestly, most folks end up using both. You might write SQL queries against a data warehouse that ETL has already filled with tidy, ready-to-use data.

Thanks for reading this article about What is ETL in the Context of Data Analytics. I hope it helps you better understand the importance and value of this process.